Artificial intelligence is now embedded at the heart of organisational decision-making, shaping outcomes in finance, recruitment, healthcare, and public administration. As its influence expands, concerns around bias, opacity, security breaches, and accountability have become impossible to ignore. These realities underline a clear truth: technical performance alone is no longer enough. AI must be governed with structure and intent in real-world settings.

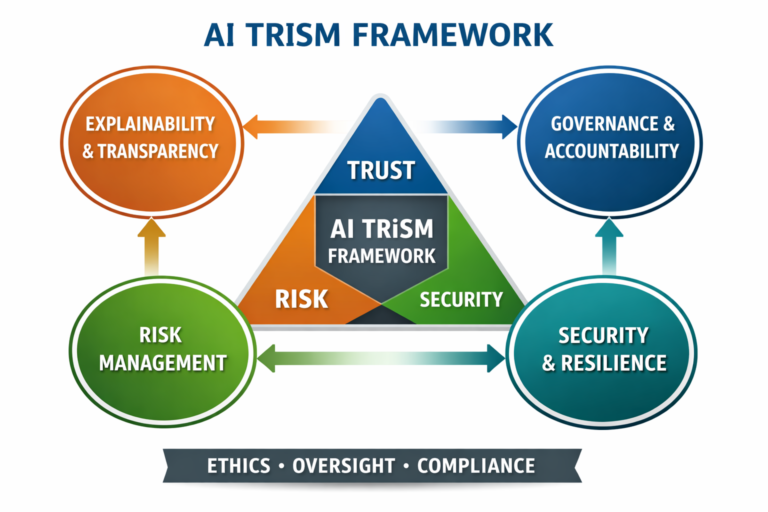

This imperative has accelerated the adoption of the AI Trust, Risk and Security Management (AI TRiSM) framework, most prominently advanced by Gartner. AI TRiSM unifies ethics, risk management, and security within a single operational model, allowing organisations to scale AI responsibly while preserving trust, mitigating risk, and ensuring systems remain reliable and socially acceptable over time.

Concept

AI TRiSM stands for Artificial Intelligence Trust, Risk and Security Management. It is a governance framework rather than a product or a regulatory tool, setting out how organisations design, deploy, monitor, and eventually retire AI systems while balancing innovation with responsibility.

Conventional IT governance assumes systems remain largely stable and predictable after deployment. AI challenges this assumption. Machine learning models evolve with new data, operate in complex environments, and can generate outcomes that are difficult even for their creators to explain. AI TRiSM addresses this challenge by embedding oversight, transparency, and risk controls across the entire AI lifecycle, from data sourcing and model development to deployment and continuous monitoring in real-world use.

The inextricable link between Trust, Risk, and Security

Trust, risk, and security are often treated as separate concerns, but in practice, they are closely interconnected. Trust reflects the confidence that users, regulators, and society place in AI systems, and it depends on fairness, transparency, reliability, and accountability. Risk relates to the potential for harm, whether financial, legal, ethical, or societal. Security, in turn, focuses on protecting AI systems from misuse, manipulation, and malicious attack.

An AI system can be technically secure yet still untrustworthy if it delivers biased or unfair outcomes. Another may be transparent and explainable, but exposed to data poisoning or model theft, creating significant operational risk. AI TRiSM is grounded in the understanding that trust, risk, and security reinforce one another and must be managed together as an integrated whole, rather than through disconnected or siloed initiatives.

Principles of Governance and Accountability

Governance sits at the centre of the AI TRiSM framework. It establishes who is responsible for AI-driven decisions, how those decisions are reviewed, and what happens when systems fail. Effective AI governance typically involves clear policies on acceptable use, formal approval processes for high-impact models, and defined escalation paths for incidents.

In practical terms, governance ensures that AI systems align with organisational values, legal obligations, and societal expectations. It also clarifies accountability, addressing a common weakness in AI deployments where responsibility is diffused across technical and business teams. Without this clarity, trust quickly erodes when problems arise.

Explainability and Transparency in Decision Making

Explainability is one of the most prominent pillars of AI TRiSM. It refers to the ability to understand and clearly communicate how an AI system produces its outputs. This is especially critical in regulated or high-impact sectors such as finance, healthcare, and public administration, where automated decisions can have significant consequences for individuals.

AI TRiSM promotes the use of explainable AI techniques, robust documentation, and clear communication with users and stakeholders. Transparency does not mean revealing proprietary algorithms. Instead, it involves providing meaningful explanations that enable decisions to be scrutinised, challenged, and improved. This capacity for explanation is essential to building and sustaining trust among regulators, users, and the wider public.

Risk Management Across the AI Lifecycle

Risk management within AI TRiSM extends far beyond initial deployment. AI systems can degrade over time as data patterns shift, a problem often called drift, or as they are exposed to new contexts. Bias can emerge where none existed before, and performance can decline without obvious warning.

For this reason, AI TRiSM emphasises continuous monitoring and periodic reassessment. Models are evaluated not only for accuracy, but also for fairness, robustness, and unintended consequences. This lifecycle approach marks a departure from traditional risk models and reflects the dynamic nature of AI technologies.
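One common way to make continuous monitoring concrete is a drift check that compares the data a model sees in production with the data it was trained on. The Python sketch below computes the Population Stability Index (PSI), a widely used drift statistic. It is an illustration rather than part of the AI TRiSM framework itself: the function names, the ten-bin default, and the 0.1 and 0.25 alert thresholds are assumptions drawn from a common rule of thumb, and a real deployment would tune them per feature.

```python
import math
from typing import Sequence


def population_stability_index(
    reference: Sequence[float], recent: Sequence[float], bins: int = 10
) -> float:
    """PSI between a reference sample and a recent sample of one numeric feature."""
    if not reference or not recent:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(reference), max(reference)
    if hi == lo:
        raise ValueError("reference sample has no spread")
    width = (hi - lo) / bins

    def proportions(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = max(0, min(bins - 1, idx))  # clamp out-of-range values into the edge bins
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    p_ref = proportions(reference)
    p_new = proportions(recent)
    return sum((new - ref) * math.log(new / ref) for ref, new in zip(p_ref, p_new))


def drift_status(psi: float) -> str:
    """Map a PSI value to a label using assumed rule-of-thumb thresholds."""
    if psi < 0.1:
        return "stable"
    if psi < 0.25:
        return "watch"
    return "reassess"


# Illustrative data only: a feature at training time and the same feature after a shift.
training_sample = [float(x) for x in range(100)]
production_sample = [x + 50.0 for x in training_sample]
print(drift_status(population_stability_index(training_sample, production_sample)))
```

A check like this covers only input drift. Fairness and robustness need their own metrics, evaluated on the same recurring schedule.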

Security and Resilience in AI Systems

Security within the AI TRiSM framework addresses threats that are specific to artificial intelligence. These include data poisoning, in which training data is deliberately manipulated; adversarial attacks that exploit model weaknesses; and unauthorised access to models or their outputs.

AI TRiSM integrates cybersecurity controls tailored to these risks, including strict data governance, model access controls, and adversarial testing. Equally important is resilience. Systems must be designed with fallback mechanisms and human oversight to prevent failures from resulting in disproportionate harm.
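The fallback idea can be sketched as a thin wrapper that lets a model decide only when it is confident and otherwise hands the case to a person. Everything named here (`Decision`, `decide`, the `(label, confidence)` model interface, and the 0.9 confidence floor) is hypothetical and chosen for illustration, not taken from any AI TRiSM specification.

```python
from dataclasses import dataclass
from typing import Callable, Mapping, Tuple

# Hypothetical model interface: takes features, returns (label, confidence in [0, 1]).
Model = Callable[[Mapping[str, float]], Tuple[str, float]]


@dataclass(frozen=True)
class Decision:
    outcome: str  # the model's label, or "pending" when a person must decide
    route: str    # "automated" or "human_review"
    reason: str


def decide(
    features: Mapping[str, float], model: Model, confidence_floor: float = 0.9
) -> Decision:
    """Return the model's decision only when it is confident; otherwise escalate."""
    try:
        label, confidence = model(features)
    except Exception as exc:
        # Fallback: a model failure never produces an automated outcome.
        return Decision("pending", "human_review", f"model error: {exc}")
    if confidence < confidence_floor:
        return Decision(
            "pending",
            "human_review",
            f"confidence {confidence:.2f} below floor {confidence_floor:.2f}",
        )
    return Decision(label, "automated", "confidence at or above floor")


def toy_model(features: Mapping[str, float]) -> Tuple[str, float]:
    """Stand-in model for the example; a real system would call a trained model."""
    return ("approve", 0.72)


print(decide({"income": 42000.0}, toy_model))
```

The design choice is that uncertainty and errors fail towards human review rather than towards an automated outcome, which is one way to keep a failure from causing disproportionate harm.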

AI TRiSM and Its Application

In operational terms, implementing AI TRiSM usually begins with an inventory of all AI use cases within an organisation. Each use case is assessed based on its potential impact, regulatory exposure, and risk profile. High-impact systems are subject to more rigorous controls, including enhanced explainability, frequent audits, and senior-level oversight.
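A minimal sketch of such an inventory, assuming each use case is scored from 1 (low) to 3 (high) on the three dimensions named above. The scoring scale, the "highest score wins" rule, and the controls listed for the medium and low tiers are assumptions made for illustration; only the high-tier controls come from the description above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIUseCase:
    name: str
    impact: int               # 1 (low) to 3 (high): potential effect on people
    regulatory_exposure: int  # 1 to 3
    risk_profile: int         # 1 to 3

    def __post_init__(self) -> None:
        for score in (self.impact, self.regulatory_exposure, self.risk_profile):
            if score not in (1, 2, 3):
                raise ValueError("scores must be 1, 2, or 3")


# Assumed mapping from tier to controls; the high tier mirrors the text.
CONTROLS = {
    "high": ["enhanced explainability", "frequent audits", "senior-level oversight"],
    "medium": ["standard documentation", "periodic review"],
    "low": ["inventory entry only"],
}


def tier(use_case: AIUseCase) -> str:
    """Assign a tier from the highest of the three scores (a conservative assumption)."""
    worst = max(use_case.impact, use_case.regulatory_exposure, use_case.risk_profile)
    return {3: "high", 2: "medium", 1: "low"}[worst]


# Hypothetical inventory entries.
inventory = [
    AIUseCase("credit scoring", impact=3, regulatory_exposure=3, risk_profile=2),
    AIUseCase("internal document search", impact=1, regulatory_exposure=1, risk_profile=1),
]

for use_case in inventory:
    level = tier(use_case)
    print(f"{use_case.name}: {level} -> {', '.join(CONTROLS[level])}")
```

Taking the highest score rather than an average means a single serious exposure is enough to place a system under the stricter controls.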

Policies and standards guide how data is collected, how models are trained, and how results are validated. Technical tools support these policies by enabling monitoring, logging, and security testing. Training and organisational culture are also critical, ensuring that staff understand both the capabilities and limitations of AI.

Functional Benefits of AI TRiSM

In financial services, AI TRiSM supports the responsible deployment of credit scoring, fraud detection, and automated compliance systems. By ensuring explainability and fairness, it helps institutions meet regulatory expectations while maintaining customer trust. In healthcare, it governs diagnostic and predictive models, where transparency and accuracy are essential for patient safety.

In the public sector, AI TRiSM provides a framework for managing systems used in taxation, social welfare, identity verification, and security. Its main benefit across these sectors is reducing operational and reputational risk while enabling organisations to scale AI solutions with confidence.

Global View on AI Trust and Risk Management

Globally, AI TRiSM aligns with a growing body of standards and regulatory initiatives. The AI Risk Management Framework (AI RMF) developed by the US National Institute of Standards and Technology (NIST) offers structured guidance on identifying and managing AI risks, while the International Organization for Standardization (ISO) has introduced standards focused on AI governance, quality, and risk control, including ISO/IEC 42001 on AI management systems.

In Europe, the EU AI Act, which entered into force in August 2024, takes a risk-based regulatory approach, placing heavier obligations on AI systems with significant societal impact. Although AI TRiSM is not itself a regulation, it provides organisations with a practical framework for translating these diverse regulatory expectations into consistent, operational practice across jurisdictions.

Africa and the Unique Challenges

Implementing AI TRiSM is not without difficulty. Many organisations across Africa face constraints related to technical capacity, data quality, and funding. Continuous monitoring and advanced explainability tools can be difficult to deploy in resource-constrained environments.

There are also institutional challenges. AI governance is still an emerging discipline, and public awareness of algorithmic risk remains limited. In the absence of detailed national AI legislation, organisations may struggle to determine appropriate standards and accountability mechanisms.

Changing for Impact

For AI TRiSM to realise its full value, organisations must recognise AI governance as a strategic priority rather than a purely technical concern. Clearer, context-aware regulatory guidance can reinforce this shift, while academic institutions and professional bodies can strengthen capacity by embedding AI ethics, risk management, and security into education and training.

Leadership commitment is equally critical. Trustworthy AI is shaped as much by organisational culture as by tools or policies. Transparency, accountability, and respect for human rights must be treated as core operational principles, not optional add-ons.

A Broad View and Summary

AI TRiSM reflects a more mature view of artificial intelligence as a socio-technical system that combines technology with human, organisational, and societal factors. It recognises AI’s potential while emphasising the responsibility to manage trust, risk, and security through practical, everyday governance rather than abstract principles.

At a time when confidence in digital systems is fragile, AI TRiSM provides organisations with a structured way to ensure AI supports human goals. It offers a realistic and sustainable approach to responsible AI adoption, particularly in complex regulatory, cultural, and economic environments.

Senior Reporter/Editor

Bio: Ugochukwu is a freelance journalist and Editor at AIbase.ng, with a strong professional focus on investigative reporting. He holds a degree in Mass Communication and brings extensive experience in news gathering, reporting, and editorial writing. With over a decade of active engagement across diverse news outlets, he contributes in-depth analytical, practical, and expository articles exploring artificial intelligence and its real-world impact. His seasoned newsroom experience and well-established information networks provide AIbase.ng with credible, timely, and high-quality coverage of emerging AI developments.